Daily Papers

AUTOACT: Automatic Agent Learning from Scratch via Self-Planning ( paper )

Language agents have achieved considerable performance on various complex tasks. Despite the incessant exploration in this field, existing language agent systems still struggle with costly, non-reproducible data reliance and face the challenge of compelling a single model to perform multiple functions. To this end, we introduce AutoAct, an automatic agent learning framework that does not rely on large-scale annotated data and synthetic trajectories from closed-source models (e.g., GPT-4). Given limited data with a tool library, AutoAct first automatically synthesizes planning trajectories without any assistance from humans or strong closed-source models. Then, AutoAct leverages a division-of-labor strategy to automatically differentiate based on the target task information and synthesized trajectories, producing a sub-agent group to complete the task. We conduct comprehensive experiments with different LLMs, which demonstrate that AutoAct yields better or comparable performance compared to various strong baselines. We even notice that AutoAct, when using the Llama-2-13b model, can achieve performance comparable to that of the GPT-3.5-Turbo agent.

Investigating Data Contamination for Pre-training Language Models ( paper )

Language models pre-trained on web-scale corpora demonstrate impressive capabilities on diverse downstream tasks. However, there is increasing concern whether such capabilities might arise from evaluation datasets being included in the pre-training corpus (a phenomenon known as data contamination) in a manner that artificially increases performance. There has been little understanding of how this potential contamination might influence LMs’ performance on downstream tasks. In this paper, we explore the impact of data contamination at the pre-training stage by pre-training a series of GPT-2 models from scratch. We highlight the effect of both text contamination (i.e., input text of the evaluation samples) and ground-truth contamination (i.e., the prompts asked on the input and the desired outputs) from evaluation data. We also investigate the effects of repeating contamination for various downstream tasks. Additionally, we examine the prevailing n-gram-based definitions of contamination within current LLM reports, pinpointing their limitations and inadequacy. Our findings offer new insights into data contamination’s effects on language model capabilities and underscore the need for independent, comprehensive contamination assessments in LLM studies.
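To make the idea of an n-gram-based contamination definition concrete, here is a minimal sketch of how such a check might look. It is an illustration only, not the paper's method or any specific LLM report's procedure: the function names, whitespace tokenization, n-gram size, and threshold are all assumptions chosen for brevity.

```python
from typing import List, Set, Tuple


def ngrams(text: str, n: int) -> Set[Tuple[str, ...]]:
    """Return the set of word-level n-grams (assumed: lowercase, whitespace tokens)."""
    tokens = text.lower().split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def contamination_ratio(sample: str, corpus_docs: List[str], n: int = 8) -> float:
    """Fraction of the sample's n-grams that also occur somewhere in the corpus."""
    sample_grams = ngrams(sample, n)
    if not sample_grams:
        # Sample is shorter than n tokens, so there is nothing to compare.
        return 0.0
    corpus_grams: Set[Tuple[str, ...]] = set()
    for doc in corpus_docs:
        corpus_grams |= ngrams(doc, n)
    return len(sample_grams & corpus_grams) / len(sample_grams)


def is_contaminated(sample: str, corpus_docs: List[str],
                    n: int = 8, threshold: float = 0.5) -> bool:
    """Flag a sample when its overlap ratio reaches the (hypothetical) threshold."""
    return contamination_ratio(sample, corpus_docs, n) >= threshold


if __name__ == "__main__":
    corpus = ["he said the quick brown fox jumps over the lazy dog today"]
    verbatim = "the quick brown fox jumps over the lazy dog"
    paraphrase = "a fast brown fox leaps over a sleepy dog"
    print(contamination_ratio(verbatim, corpus, n=3))
    print(contamination_ratio(paraphrase, corpus, n=3))
```

In this toy setup the verbatim sample overlaps fully with the corpus while the paraphrase shares no trigrams with it, which hints at one commonly noted weakness of surface-overlap checks: reworded evaluation data can go undetected.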

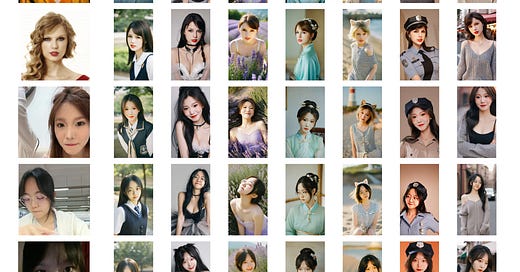

InstantID: Zero-shot Identity-Preserving Generation in Seconds ( paper | webpage )

There has been significant progress in personalized image synthesis with methods such as Textual Inversion, DreamBooth, and LoRA. Yet, their real-world applicability is hindered by high storage demands, lengthy fine-tuning processes, and the need for multiple reference images. Conversely, existing ID embedding-based methods, while requiring only a single forward inference, face challenges: they either necessitate extensive fine-tuning across numerous model parameters, lack compatibility with community pre-trained models, or fail to maintain high face fidelity. Addressing these limitations, we introduce InstantID, a powerful diffusion model-based solution. Our plug-and-play module adeptly handles image personalization in various styles using just a single facial image, while ensuring high fidelity. To achieve this, we design a novel IdentityNet by imposing strong semantic and weak spatial conditions, integrating facial and landmark images with textual prompts to steer the image generation. InstantID demonstrates exceptional performance and efficiency, proving highly beneficial in real-world applications where identity preservation is paramount. Moreover, our work seamlessly integrates with popular pre-trained text-to-image diffusion models like SD1.5 and SDXL, serving as an adaptable plugin.

Scalable Pre-training of Large Autoregressive Image Models ( paper | code )

An Experimental Design Framework for Label-Efficient Supervised Finetuning of Large Language Models ( paper )

AI News

1. LeftoverLocals: Listening to LLM responses through leaked GPU local memory ( link )

2. Chip Huyen: Sampling for Text Generation ( link )

3. A Guide to Large Language Model Abstractions ( link )

AI Repos

1. Open TTS Tracker: A one-stop shop to track all open-access/open-source TTS models as they come out. ( repo )

2. Prompt Engineering Guide ( repo )

3. surya: Accurate line-level text detection and recognition (OCR) in any language ( repo )